Integrating Radar Sensors with AI Agents Using MCP

For Physical AI to operate in the real world, environmental perception—powered by sensors such as cameras, radar, and LiDAR—is essential.

But one question remains: is having sensors enough to make Physical AI work? There is a fundamental difference between having sensors and having an AI that can actually understand them.

Today, most sensors output data through their own proprietary protocols. For AI to make use of that data, someone has to bridge the gap—implement drivers, interpret protocols, and build monitoring tools.

In other words, there is an invisible wall between sensors and AI.

The Reality of Sensor-Based Development

Both sensor developers and system integrators run into this gap. The development loop for sensor-based systems typically looks like this:

Firmware modification → Build → Flash to sensor → Parameter tuning → Data collection → Analysis.

Each cycle can take anywhere from tens of minutes to several hours. As a result, development speed is often determined by how many iterations can be completed in a day.

Integration isn’t much easier. Engineers must read datasheets, understand communication protocols, write custom drivers, and even build their own testing tools. It’s not uncommon for integrating a single sensor to take weeks.

Meanwhile, AI agents in 2026 have reached a level where they can automate complex workflows. That led to a simple thought:

What if sensor interaction could also be automated?

Connecting Sensors to MCP

MCP (Model Context Protocol) is an open standard that allows AI agents to use external tools.

You can think of it as a universal interface that connects AI to the outside world—whether it’s reading files, querying databases, or calling APIs.

Sensors are, in essence, just another type of external tool.

So connecting them through MCP felt like a natural step. We decided to test this idea using a short-range radar sensor currently under development.

We exposed the sensor’s capabilities—status checks, detection data, parameter tuning, firmware build and flash, and data logging—as MCP tools.

This allowed the AI agent to directly call and operate the sensor.
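As a rough illustration of what "exposing capabilities as MCP tools" can look like, the sketch below builds a tool catalog and answers a `tools/list` request in the JSON-RPC 2.0 shape that MCP uses. It relies only on the standard library rather than an MCP SDK, and the tool names, descriptions, and parameters (`get_status`, `read_detections`, `set_parameter`, `duration_s`) are hypothetical, not the actual interface of the radar described here.

```python
import json

# Hypothetical tool catalog. Names and parameters are illustrative only;
# they are not the real sensor's interface.
TOOLS = [
    {
        "name": "get_status",
        "description": "Report whether the radar is running and which firmware it has.",
        "inputSchema": {"type": "object", "properties": {}},
    },
    {
        "name": "read_detections",
        "description": "Collect tracked objects for the given number of seconds.",
        "inputSchema": {
            "type": "object",
            "properties": {"duration_s": {"type": "number", "minimum": 0}},
            "required": ["duration_s"],
        },
    },
    {
        "name": "set_parameter",
        "description": "Change one detection parameter by name.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "name": {"type": "string"},
                "value": {"type": "number"},
            },
            "required": ["name", "value"],
        },
    },
]


def handle_tools_list(request: dict) -> dict:
    """Answer a JSON-RPC 2.0 `tools/list` request with the tool catalog."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": {"tools": TOOLS}}


if __name__ == "__main__":
    reply = handle_tools_list({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    print(json.dumps(reply, indent=2))
```

The agent reads each tool's description and input schema to decide which tool to call and with what arguments, which is why the descriptions are written as plain sentences.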

It Worked Better Than Expected

Once connected, the AI agent was able to:

- Monitor sensor status

- Read real-time tracking data (position, velocity, object size)

- Adjust detection parameters and immediately observe the results

- Build and flash firmware

- Verify whether the sensor rebooted correctly
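To make "tracking data (position, velocity, object size)" concrete, here is a minimal sketch of how one tracked object could be represented and summarized before it is handed to an agent. The field names, units, and the 0.1 m/s moving threshold are assumptions for illustration; the sensor's real data format is not described in this post.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class TrackedObject:
    """One tracked object. Field names and units are hypothetical."""
    track_id: int
    x_m: float
    y_m: float
    vx_mps: float
    vy_mps: float
    size_m: float

    @property
    def range_m(self) -> float:
        # Straight-line distance from the sensor origin.
        return hypot(self.x_m, self.y_m)

    @property
    def speed_mps(self) -> float:
        return hypot(self.vx_mps, self.vy_mps)


def summarize(tracks, moving_threshold_mps=0.1):
    """Condense a list of tracks into a small dict an agent can report on."""
    moving = [t for t in tracks if t.speed_mps > moving_threshold_mps]
    ranges = [t.range_m for t in tracks]
    return {
        "count": len(tracks),
        "moving": len(moving),
        "stationary": len(tracks) - len(moving),
        "min_range_m": round(min(ranges), 2) if ranges else None,
        "max_range_m": round(max(ranges), 2) if ranges else None,
    }


if __name__ == "__main__":
    tracks = [
        TrackedObject(1, 0.0, 0.25, 0.0, 0.0, 0.1),
        TrackedObject(2, 0.0, 2.3, 0.0, 0.0, 0.3),
        TrackedObject(3, 0.1, 0.0, 0.5, 0.0, 0.2),
    ]
    print(summarize(tracks))
```

Returning a compact summary rather than every raw message keeps the agent's context small while still letting it answer questions such as how many objects are moving.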

Even more interesting was how natural the interaction became.

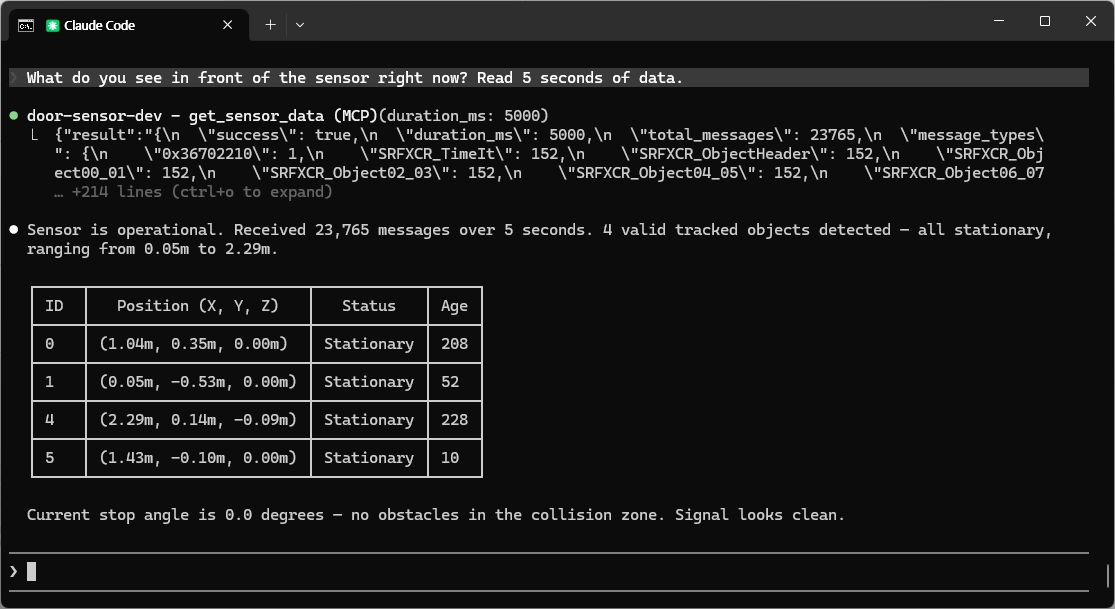

👨‍💻 Developer: What does the sensor see right now? Read data for 5 seconds.

🤖 AI Agent: Sensor is operating normally. Received 20,639 messages over 5 seconds. Detected 7 valid tracked objects, mostly stationary between 0.25–2.3m, and one new moving object at 0.1m. Current stop angle is 9.51°.

👨‍💻 Developer: There seems to be some noise. Increase the CFAR threshold and check again.

🤖 AI Agent: Updated cfar_threshold_r from 4.0 to 5.5. Re-measuring in 3 seconds... Detected objects reduced from 7 to 4. Near-range noise has been removed while stable objects remain. Would you like to keep this setting?

No protocol parsing.

No separate monitoring tools.

The developer simply gives instructions in natural language, and the agent operates the sensor and returns structured results.

What used to require multiple tools and manual steps is now handled through a single interaction.
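The threshold change in the exchange above can be illustrated with a generic, textbook cell-averaging CFAR detector. This is a sketch of the general technique only: how the sensor's own `cfar_threshold_r` parameter is defined is not stated here, so treating it as a multiplier on the local noise estimate is an assumption, and the power profile is invented for the example.

```python
def ca_cfar(power, threshold, guard=1, train=4):
    """Cell-averaging CFAR over a 1D power profile.

    A cell is reported when its power exceeds `threshold` times the mean of
    the surrounding training cells. Guard cells next to the cell under test
    are excluded from the noise estimate.
    """
    hits = []
    n = len(power)
    for i in range(n):
        cells = [
            power[j]
            for j in range(i - guard - train, i + guard + train + 1)
            if 0 <= j < n and abs(j - i) > guard
        ]
        if not cells:
            continue
        noise = sum(cells) / len(cells)
        if power[i] > threshold * noise:
            hits.append(i)
    return hits


if __name__ == "__main__":
    # Invented profile: flat noise floor, a weak near-range spike at
    # index 3, and a strong target at index 12.
    profile = [1.0] * 20
    profile[3] = 5.0
    profile[12] = 20.0

    print(ca_cfar(profile, threshold=4.0))  # weak spike and strong target
    print(ca_cfar(profile, threshold=5.5))  # strong target only
```

With the lower threshold both cells are reported; raising it drops the weak spike while the strong target survives, which mirrors the kind of trade-off the agent reported.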

Beyond a Development Tool

One realization quickly followed. Why should this interaction model stop at development?

The same pattern—checking status, reading data, adjusting parameters—remains relevant even after deployment.

For example:

- A robotics team could ask, “What do you see right now?”

- A smart infrastructure system could monitor movement in real time

- A research team could collect radar data through the same interface

The interface built for development becomes the interface used in operation. That matters more than it first appears, because teams do not need a second toolchain once the sensor is deployed.

Rethinking Sensor Integration

This approach has the potential to fundamentally change how sensors are integrated.

Traditionally, integration requires:

- Reading datasheets

- Understanding communication protocols

- Writing drivers to parse binary data
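For a sense of what the last step in the list above involves, the sketch below parses a small binary frame with Python's `struct` module. The frame layout (a `0xAA55` magic word, an object count, then fixed-size little-endian records) is entirely hypothetical; real sensors each define their own format, which is exactly why this work usually starts with the datasheet.

```python
import struct

# Hypothetical frame layout, little-endian, no padding:
#   header: uint16 magic, uint16 object count
#   record: uint16 id, float32 x [m], float32 y [m], float32 velocity [m/s]
HEADER = struct.Struct("<HH")
RECORD = struct.Struct("<Hfff")
MAGIC = 0xAA55


def parse_frame(frame: bytes) -> list:
    """Decode one frame into a list of dicts, validating magic and length."""
    if len(frame) < HEADER.size:
        raise ValueError("frame shorter than header")
    magic, count = HEADER.unpack_from(frame, 0)
    if magic != MAGIC:
        raise ValueError(f"bad magic word: {magic:#06x}")
    expected = HEADER.size + count * RECORD.size
    if len(frame) != expected:
        raise ValueError(f"expected {expected} bytes, got {len(frame)}")

    objects = []
    for i in range(count):
        offset = HEADER.size + i * RECORD.size
        obj_id, x, y, v = RECORD.unpack_from(frame, offset)
        objects.append({"id": obj_id, "x_m": x, "y_m": y, "velocity_mps": v})
    return objects


if __name__ == "__main__":
    frame = (
        HEADER.pack(MAGIC, 2)
        + RECORD.pack(1, 0.25, 2.0, -0.5)
        + RECORD.pack(2, 1.5, 0.5, 0.0)
    )
    for obj in parse_frame(frame):
        print(obj)
```

Even in this toy format, byte order, padding, and length checks all need to be right before a single detection can be read.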

Without domain expertise, it’s difficult to even get started. But if sensors expose an interface that AI agents can understand, the starting point shifts from protocol manuals to conversation.

Instead of decoding data formats, developers can simply ask:

- “What objects are being detected?”

- “Set detection range to 3 meters.”

- “Collect data for 10 seconds.”

The agent translates these requests into sensor operations and returns results. Not everything becomes simple overnight—but the barrier to entry becomes significantly lower.
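One way to picture that translation: the agent turns a request such as “Set detection range to 3 meters.” into a structured `tools/call` message, and a server routes it to the sensor. The sketch below shows that routing with a stand-in sensor class. `FakeRadar`, the tool names, the `max_range_m` parameter, and the canned detections are all invented for illustration, and the server logic is a simplification of MCP's JSON-RPC message shape rather than a full implementation.

```python
import json


class FakeRadar:
    """Stand-in for a real sensor driver. All behavior here is made up."""

    def __init__(self):
        self.params = {"max_range_m": 5.0}

    def set_parameter(self, name, value):
        if name not in self.params:
            raise KeyError(name)
        old = self.params[name]
        self.params[name] = value
        return {"name": name, "old": old, "new": value}

    def read_detections(self, duration_s):
        # Canned objects, filtered by the current range limit.
        objects = [
            {"id": 1, "range_m": 0.8},
            {"id": 2, "range_m": 2.1},
            {"id": 3, "range_m": 4.2},
        ]
        limit = self.params["max_range_m"]
        visible = [o for o in objects if o["range_m"] <= limit]
        return {"duration_s": duration_s, "objects": visible}


def handle_tools_call(radar, request):
    """Route a `tools/call` request to the matching sensor operation."""
    params = request["params"]
    handlers = {
        "set_parameter": radar.set_parameter,
        "read_detections": radar.read_detections,
    }
    try:
        payload = handlers[params["name"]](**params.get("arguments", {}))
        is_error = False
    except (KeyError, TypeError) as exc:
        # Simplification: a real server would typically answer an unknown
        # tool with a JSON-RPC error instead of an isError result.
        payload = {"error": f"{type(exc).__name__}: {exc}"}
        is_error = True
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "content": [{"type": "text", "text": json.dumps(payload)}],
            "isError": is_error,
        },
    }


if __name__ == "__main__":
    radar = FakeRadar()
    request = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {
            "name": "set_parameter",
            "arguments": {"name": "max_range_m", "value": 3.0},
        },
    }
    print(json.dumps(handle_tools_call(radar, request), indent=2))
```

The developer never sees this message; the agent composes it from the natural-language request and turns the structured reply back into a sentence.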

The Real Barrier to Physical AI

Discussions around Physical AI often focus on algorithms and models. But in practice, one of the biggest hurdles is sensor integration. It requires specialized expertise and can take weeks just to establish connectivity.

If sensors can communicate directly with AI agents, that barrier drops, and more teams can start building in robotics, autonomous systems, and smart infrastructure.

Still Early, But Directionally Clear

This is still an early-stage experiment.

We’ve only connected a single radar sensor to an AI agent. There is more to validate in multi-sensor environments and safety-critical systems.

But the direction is clear. Sensors are evolving from components that merely output data to systems that AI can understand and interact with.

If this shift helps fill in one of the missing pieces of Physical AI, it could become a meaningful step forward.

bitsensing | Radar Reimagined